Conversational Analytics | What the Data Says About Accuracy

Conversational analytics (CA) — ask a question in English, get a chart back — is one of the most heavily marketed applications of large language models to enterprise data. Every vendor promises “natural-language access to your data,” but the gap between a polished demo and a production-ready system is large, and most accuracy claims in the wild are anecdotal or selected from favorable conditions. I wanted a more systematic answer to a basic question: how accurate is CA, really, and what can a data team actually do to improve it?

To answer this, I built a benchmark that varies three factors independently — the richness of the semantic layer provided to the model, the number of example queries included in the prompt, and the SQL complexity required to answer the question — and ran it against a small but realistic SaaS e-commerce schema. The project had three overall goals:

- I designed a controlled benchmark of 100 hand-written natural-language questions paired with validated ground-truth SQL queries, stratified across four levels of SQL complexity. The benchmark, seed data, prompts, and analysis scripts are available in the nl-sql-multitable repository.

- I evaluated the contributions of the semantic layer and example shots by sweeping each factor across multiple levels and measuring how each interacts with query complexity. The full sweep produced 6,000 model calls.

- I translated the results into actionable recommendations for the data engineers, analytics engineers, and product designers building these systems, as well as for the end users posing the questions.

Key Takeaways

The benchmark suggests that CA accuracy is not a matter of luck or vendor magic. It is a predictable function of three factors that practitioners already control.

- Semantic models work. A detailed semantic layer is enough to teach the model the conventions of the schema. Adding a curated set of 10–20 example queries can provide small additional improvements.

- The data model sets the ceiling. SQL complexity dominated every other factor in this benchmark. Investments in views, marts, and denormalized rollups that collapse joins and eliminate subqueries for common questions are the single highest-leverage thing a data team can do for CA accuracy.

- Ambiguity is a UX problem, not a prompting problem. Vague questions remain low-accuracy regardless of how rich the prompt is. Clarification flows, surfaced interpretations, and inline hints are the right place to address them.

The table below summarizes the accuracy a team can reasonably expect under each condition, assuming a moderate semantic layer or roughly 20 examples are provided.

| Condition | Expected accuracy |

|---|---|

| Single-table, clear question | 90–100% |

| Two-table join, clear question | 80–85% |

| Subquery / CTE / window, clear question | 20–35% |

| Any tier, ambiguous question | 30–45% |

The full code, seed data, prompts, and analysis are available at github.com/purcelba/nl-sql-multitable.

Data Overview

The benchmark uses three normalized tables — customers, products, and orders — representing a small SaaS e-commerce business. Column names are intentionally opaque (e.g., dt for order date, price stored as integer cents, refunded as a 0/1 flag) so that a bare DDL prompt is a genuinely hard baseline and the semantic layer has something meaningful to add.

The 100 test questions are stratified across four complexity tiers, with 25 questions per tier:

- Single-table — one table only, with filters and aggregates.

- Two-join — exactly two tables joined.

- Three-join — all three tables joined.

- Complex — three-join plus at least one of: subquery, CTE, window function, or HAVING clause with an aggregated filter.

Each question was paired with a ground-truth SQL query that I validated against the seeded database. An additional 13 “vague variant” rewrites were authored to test the impact of question ambiguity (more on this below).

Methods

For each model call, the prompt was constructed from one of four semantic-layer packages and one of five example-shot counts. The semantic-layer levels are nested:

| Level | Contents |

|---|---|

| L0 | Bare DDL only — opaque column names, no context |

| L1 | L0 plus a column glossary with plain-English labels |

| L2 | L1 plus field descriptions, allowed values, and units |

| L3 | L2 plus business rules, join guidance, and common conventions (e.g., “prices are cents”, “recent means 30 days”) |

Example shot counts were 0, 10, 20, 30, or 40 question-and-SQL pairs, stratified so that each count included examples from every complexity tier. Shot examples were disjoint from the test set.

I used Claude Haiku 4.5 at temperature 0 with three trials per condition. The full design — 100 questions × 4 semantic-layer levels × 5 shot counts × 3 trials — produced 6,000 calls.

I scored each generated query on two metrics. Execution rate measures whether the SQL runs at all. Result-set accuracy measures whether the output of the generated query matches the output of the ground-truth query, compared as a column-name-agnostic sorted tuple. Result-set accuracy is the headline metric throughout.

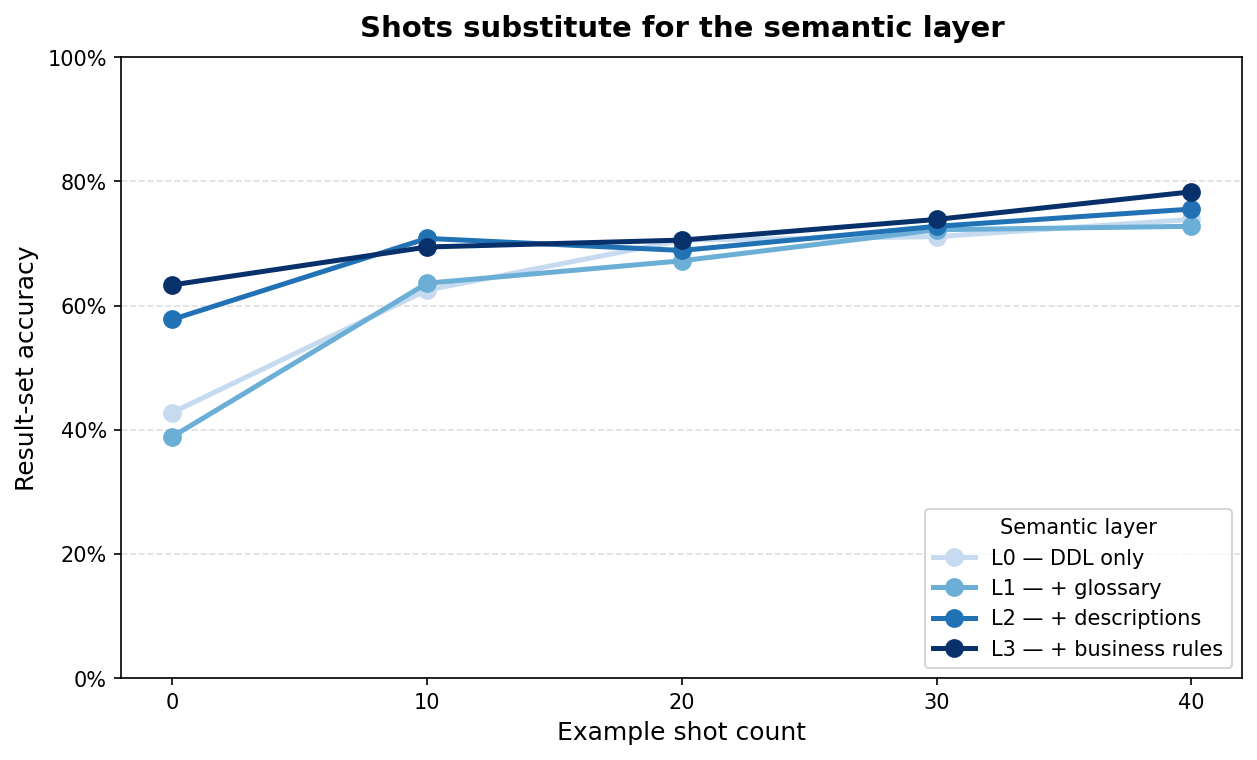

The Semantic Layer and Example Shots are Substitutes

The first result was less expected and is the most practically useful. A rich semantic layer helps when there are no examples in the prompt. Examples help when the semantic layer is bare. But once either factor is provided at strength, the other contributes little additional accuracy.

Figure 1. Accuracy by shot count, with one line per semantic-layer level. Lines are well-separated at 0 shots and converge by 20–40 shots.

Averaged across all 100 questions:

| 0 shots | 20 shots | 40 shots | |

|---|---|---|---|

| L0 | 42.8% | 70.6% | 73.9% |

| L3 | 63.3% | 70.6% | 78.3% |

At 0 shots the L0–L3 gap is 20 points and a dense semantic layer is clearly carrying the prompt. By 20 shots the gap is gone. By 40 shots it is 4 points. An interesting inversion is that 40 shots with bare DDL (73.9%) outperforms a rich semantic layer with no examples (63.3%).

Both channels appear to do the same job through different mechanisms. The semantic layer tells the model in prose that price is in cents, that refunded is a boolean, and that joins go in a particular direction. Examples show the model SQL in which these conventions are already reflected. Either channel is sufficient to teach the model the house style, and running both at full volume is largely redundant.

In practical terms, this means a team can pick whichever investment is cheaper to maintain. Teams with a stable set of historical example queries — dashboard definitions, BI tickets, analyst notebooks — can lean on shots, which are easy to refresh, version, and review. Teams with a strong data governance function producing a semantic layer can lean on it, since it doubles as documentation. Teams with neither are best served by curating 10–20 well-chosen examples; that is the cheapest path to the 70% accuracy band for non-complex queries.

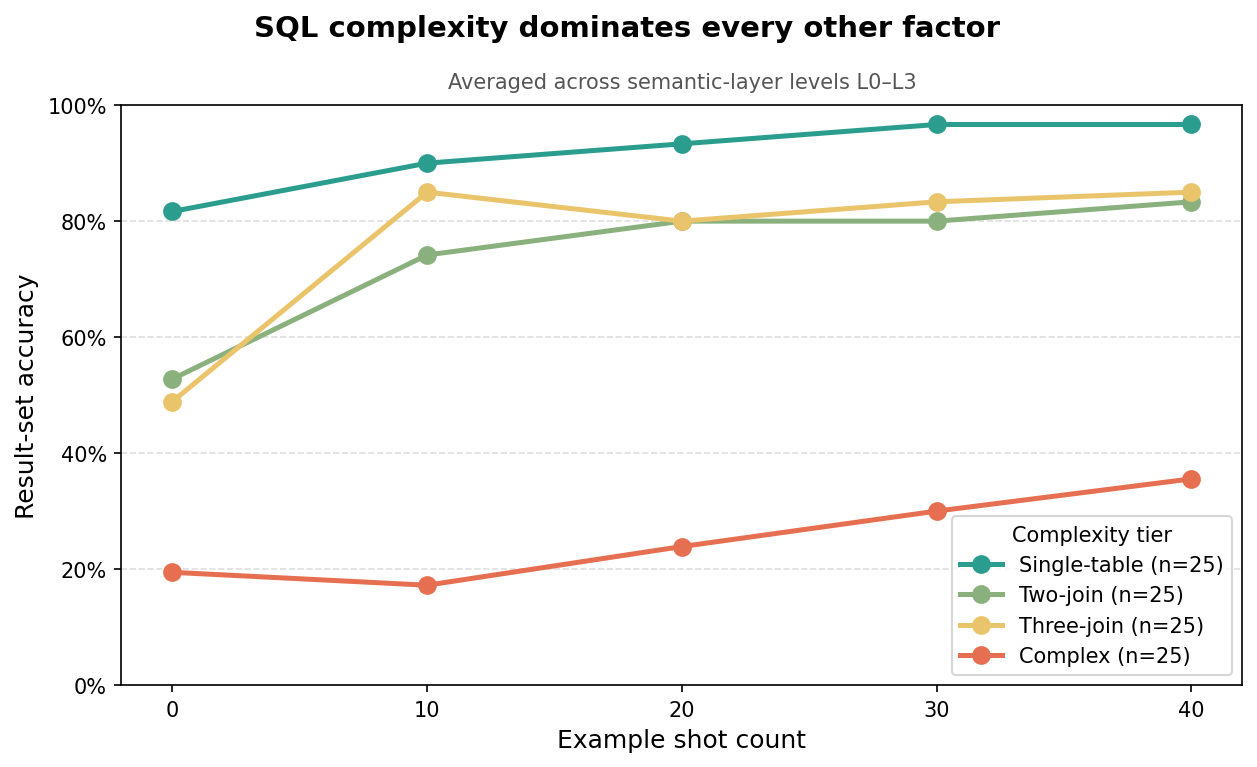

SQL Complexity is the Dominant Factor

The single most important factor determining whether CA produces a correct answer is how hard the underlying SQL is. Not the semantic layer. Not examples. How many joins and how much nested logic the question requires.

Figure 2. Result-set accuracy by complexity tier, averaged across semantic-layer levels L0–L3. The gap between tiers is much larger than the gap between semantic-layer or shot conditions.

The table below shows accuracy for each tier across semantic-layer levels at 0 shots, plus the L3 + 20-shot condition as a reasonable “well-invested” endpoint.

| Tier | L1, 0-shot | L2, 0-shot | L3, 0-shot | L3 + 20 shots |

|---|---|---|---|---|

| Single-table | 73.3% | 86.7% | 86.7% | 93.3% |

| Two-join | 22.2% | 76.7% | 80.0% | 80.0% |

| Complex | 20.0% | 13.3% | 24.4% | 22.2% |

Three observations are worth calling out.

First, single-table accuracy is high even at the minimal L1 + 0-shot prompt (73%), and reaches 93% with a rich semantic layer and a handful of examples. CA use cases that involve filters and aggregates over a single table or a pre-built mart are essentially a solved problem.

Second, two-join queries respond strongly to the semantic layer. The L1 → L2 jump at 0 shots is 55 points. This is the regime where business rules and join guidance earn their keep — the model needs to know which key to join on, which direction the join goes, and where filters belong.

Third, the complex tier never moves out of the 13–33% band. No combination of semantic layer and shots that I tested rescues queries requiring subqueries, CTEs, or window functions.

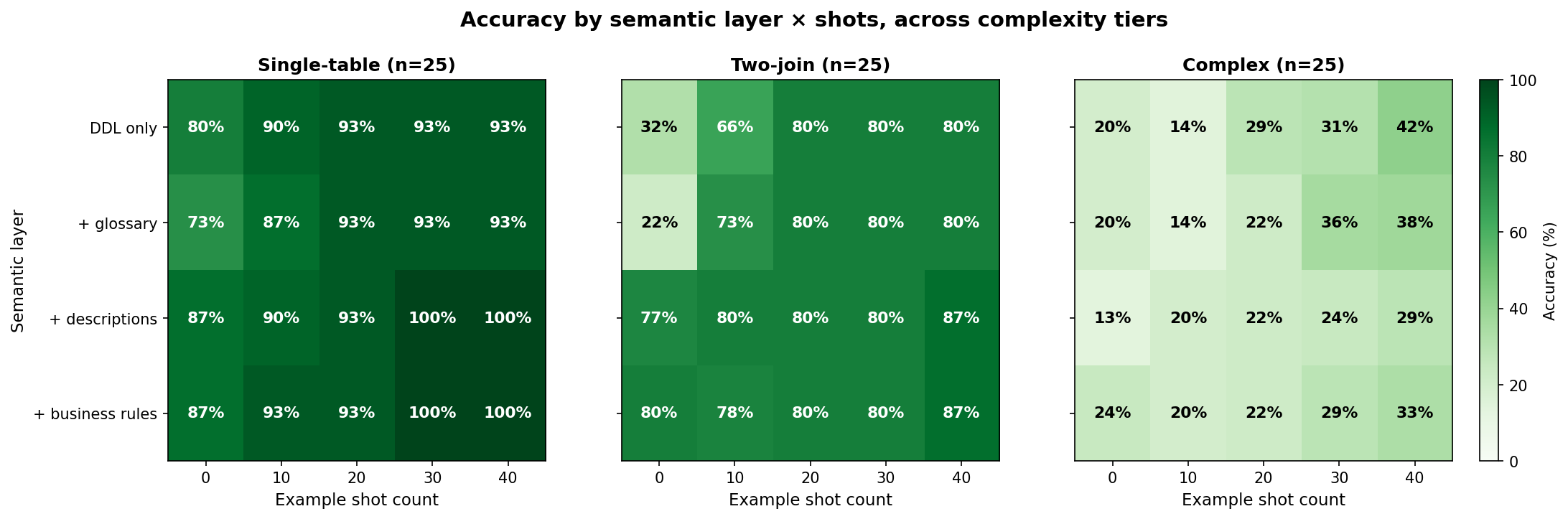

Figure 3. Accuracy heatmap for complex queries only. The surface is flat and low across all combinations of semantic layer and shots.

The practical implication is that complexity should be addressed in the data model, not in the prompt. Every join that can be collapsed into a view, every subquery that can be pre-materialized into a mart table, and every window function that can be replaced with a denormalized rollup turns a query the model gets wrong into one it gets right. This is the most leveraged investment a data team can make for CA accuracy, and it is entirely within their control.

Question Ambiguity is a Separate Tax

The third result is about the user, not the data. Each question in the benchmark was hand-tagged for ambiguity on a three-level scale. Low-ambiguity questions are fully specified (e.g., “total revenue from hardware in Q3 2025”). Medium-ambiguity questions involve one interpretive choice (e.g., “refund rate” could be count-based or revenue-based). High-ambiguity questions require domain inference (e.g., “self-serve customers” when the schema only has a sales-rep field that is sometimes NULL). Of the 113 questions including the vague variants, 85 are low, 25 are medium, and only 3 are high, so the comparison below focuses on low versus medium.

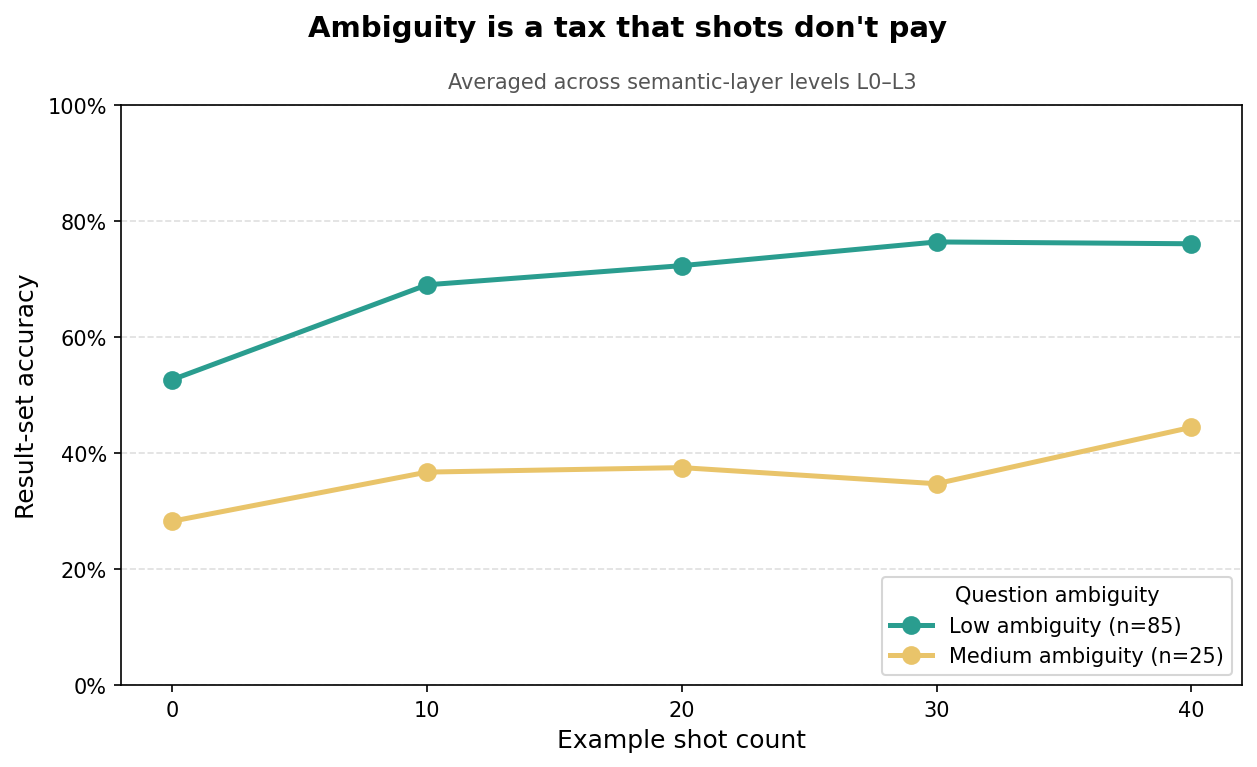

Figure 4. Accuracy by shot count, split by question ambiguity. The gap between low and medium ambiguity is roughly 35 points and does not close as more examples are added.

Averaged across all shot counts:

| Tag | n | L1 | L2 | L3 | Mean |

|---|---|---|---|---|---|

| Low | 85 | 65.6% | 70.8% | 73.5% | 69.3% |

| Medium | 25 | 33.5% | 40.0% | 41.0% | 36.3% |

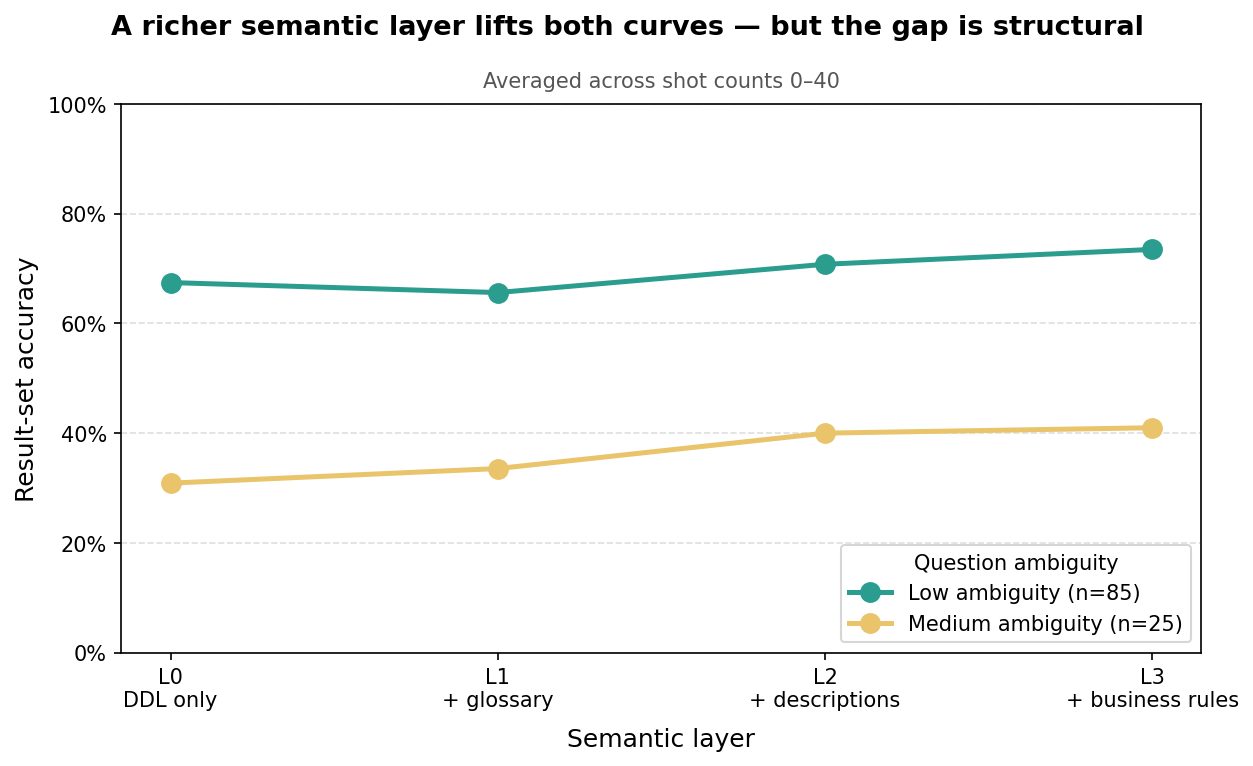

Two patterns are worth noting. The semantic layer does help ambiguous questions in absolute terms — the L1 → L3 lift is roughly 8 points for both groups — and L3 business rules can resolve some interpretive ambiguity (e.g., spelling out that “refund rate” = refunded_orders / total_orders). But ambiguous questions never catch up. Even with an L3 semantic layer and 40 shots, they plateau around 40%, well below their clear counterparts.

Figure 5. Accuracy by semantic-layer level, split by ambiguity. A richer semantic layer lifts both curves, but the gap between them is structural.

This is fundamentally a semantic problem rather than a data-infrastructure problem. The system cannot know whether “most popular product” means the most orders, the most units sold, or the most revenue. No semantic layer can resolve that — only the user can. The right place to address ambiguity is the product surface: detecting ambiguous questions before running them and asking for clarification, surfacing the interpretation the system made alongside the answer so the user can correct it in one click, and providing inline hints that teach users to be specific. A thoughtful clarification UX will outperform any amount of additional semantic modeling for this class of questions.